Novel view synthesis from limited observations remains an important and persistent task. However, existing NeRF-based few-shot view synthesis methods often sacrifice efficiency to obtain an accurate 3D representation. To address this challenge, we propose a few-shot view synthesis framework based on 3D Gaussian Splatting that enables real-time and photo-realistic view synthesis with as few as three training views. The proposed method, dubbed FSGS, handles the extremely sparse initialized SfM points with a thoughtfully designed Gaussian Unpooling process. Our method iteratively distributes new Gaussians around the most representative locations, subsequently infilling local details in vacant areas. We also integrate a large-scale pre-trained monocular depth estimator within the Gaussian optimization process, leveraging online augmented views to guide the geometric optimization towards an optimal solution. Starting from sparse points observed from limited input viewpoints, FSGS can accurately grow into unseen regions, comprehensively covering the scene and boosting the rendering quality of novel views. Overall, FSGS achieves state-of-the-art performance in both accuracy and rendering efficiency across diverse datasets, including LLFF, Mip-NeRF360, and Blender. Code will be made available.
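To make the idea of growing Gaussians into vacant areas concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the released FSGS code: it assumes that "representative locations" can be approximated by midpoints between a Gaussian and its nearest neighbors, and that a Gaussian sits in a sparse region when its mean neighbor distance exceeds a threshold. The function name `unpool_gaussians`, the parameter `k`, and the default threshold are hypothetical. Only positions are handled; initializing the remaining Gaussian attributes is not covered.

```python
import numpy as np

def unpool_gaussians(centers, k=3, threshold=None):
    """Propose new Gaussian centers in sparsely covered regions.

    centers:   (N, D) array of existing Gaussian centers.
    k:         number of nearest neighbors per Gaussian (hypothetical parameter).
    threshold: mean-neighbor-distance cutoff; defaults to the average over
               all Gaussians (an assumption made for this sketch).
    Returns an (M, D) array of new centers, possibly empty.
    """
    centers = np.asarray(centers, dtype=float)
    n, dim = centers.shape
    k = min(k, n - 1)
    if k < 1:
        return np.empty((0, dim))

    # Brute-force pairwise distances; O(N^2) is acceptable for a sketch.
    diff = centers[:, None, :] - centers[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbor

    nn_idx = np.argsort(dist, axis=1)[:, :k]            # (N, k)
    nn_dist = np.take_along_axis(dist, nn_idx, axis=1)  # (N, k)

    # Mean distance to the k nearest neighbors: large values mean sparse coverage.
    proximity = nn_dist.mean(axis=1)
    if threshold is None:
        threshold = proximity.mean()

    sparse = np.flatnonzero(proximity > threshold)
    if sparse.size == 0:
        return np.empty((0, dim))

    # Place one candidate at the midpoint of each edge leaving a sparse Gaussian.
    src = centers[sparse][:, None, :]   # (S, 1, D)
    dst = centers[nn_idx[sparse]]       # (S, k, D)
    midpoints = (0.5 * (src + dst)).reshape(-1, dim)

    # Drop exact duplicates (the same edge reached from both endpoints).
    return np.unique(midpoints, axis=0)
```

Applied repeatedly during training, a step like this would add points where coverage is thin while leaving dense regions untouched; a real pipeline would replace the brute-force neighbor search with a spatial index.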

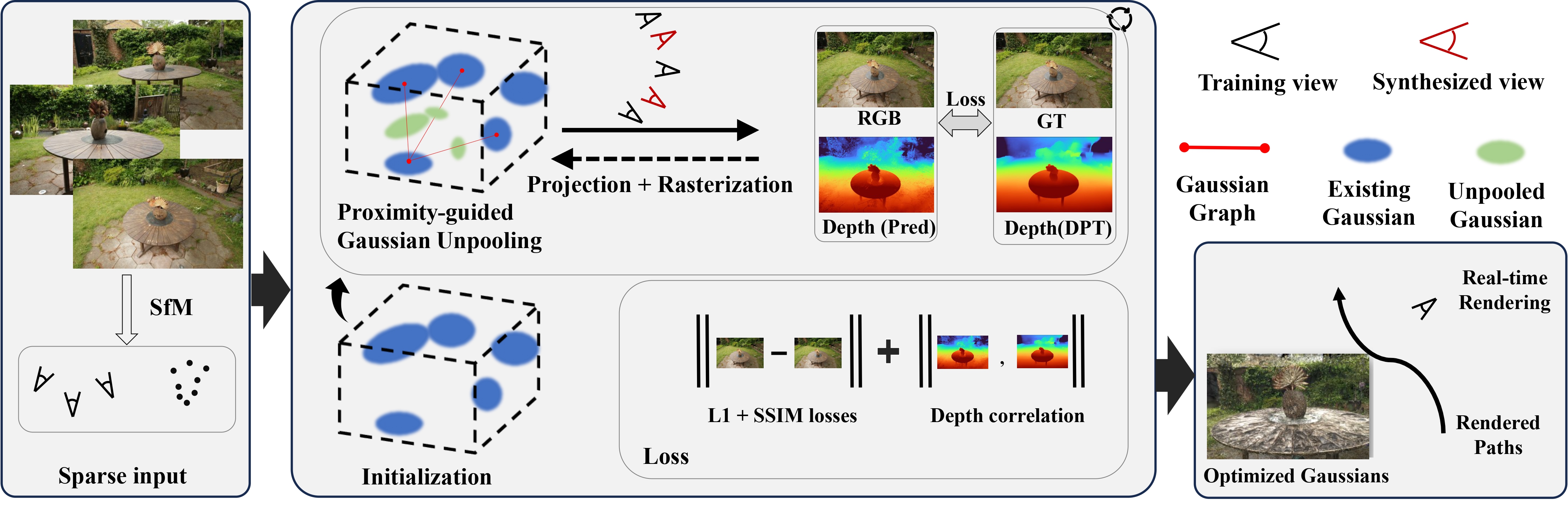

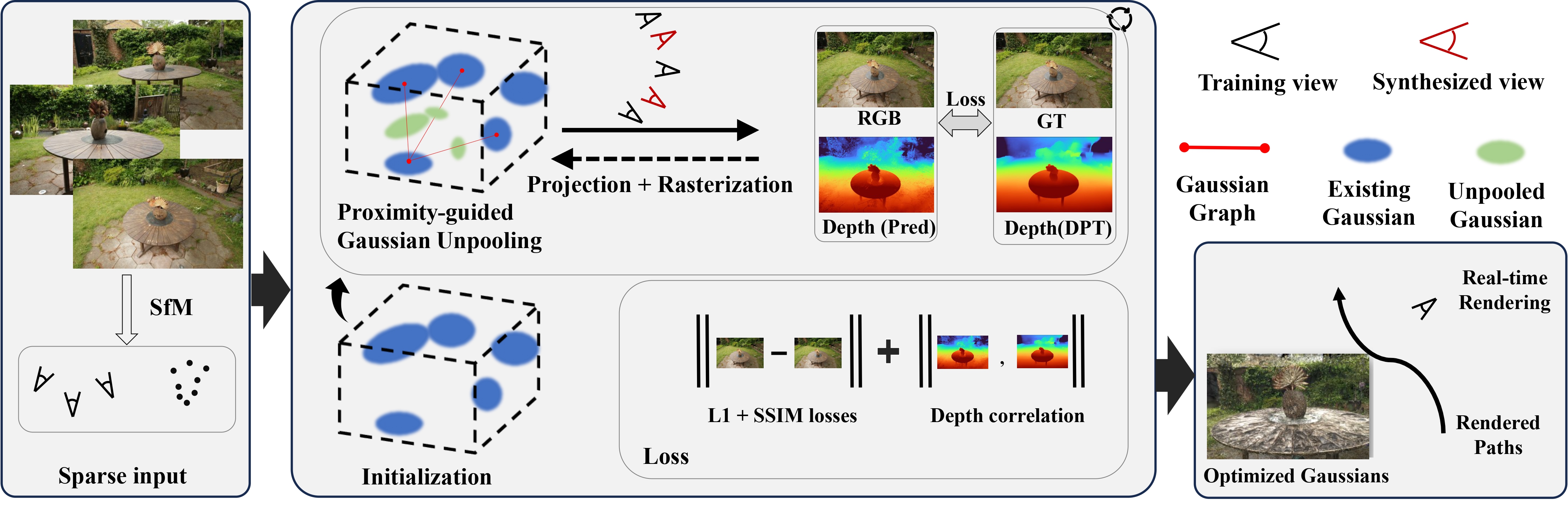

FSGS is initialized from SfM points computed from a few input images (black cameras). Because these initial Gaussians are sparsely placed, we densify the scene by unpooling existing Gaussians into new ones with properly initialized attributes, which improves scene coverage. Monocular depth priors, enhanced by sampling unobserved views (red cameras), guide the optimization of the grown Gaussians towards a reasonable geometry. The final loss consists of a photometric loss term and a geometric regularization term computed as a depth correlation.
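The two-term loss described above can be sketched as follows. This is a minimal NumPy illustration, not the authors' implementation: it assumes the depth correlation is a Pearson correlation between the rendered depth map and the monocular depth estimate, turned into a loss as one minus the correlation, and it uses a plain L1 photometric term. The function names and the `depth_weight` value are hypothetical.

```python
import numpy as np

def pearson_depth_loss(rendered_depth, mono_depth, eps=1e-8):
    """Return 1 - Pearson correlation between two depth maps.

    Near 0 when the maps agree up to a positive scale and a shift,
    near 2 when they are perfectly anti-correlated.
    """
    r = np.asarray(rendered_depth, dtype=float).ravel()
    m = np.asarray(mono_depth, dtype=float).ravel()
    r = r - r.mean()
    m = m - m.mean()
    corr = (r * m).sum() / (np.sqrt((r ** 2).sum() * (m ** 2).sum()) + eps)
    return 1.0 - corr

def total_loss(rendered_rgb, target_rgb, rendered_depth, mono_depth,
               depth_weight=0.05):
    """Photometric L1 term plus a weighted depth-correlation term.

    depth_weight is a hypothetical value chosen for illustration only.
    """
    rendered_rgb = np.asarray(rendered_rgb, dtype=float)
    target_rgb = np.asarray(target_rgb, dtype=float)
    photometric = np.abs(rendered_rgb - target_rgb).mean()
    geometric = pearson_depth_loss(rendered_depth, mono_depth)
    return photometric + depth_weight * geometric
```

A correlation-based term suits monocular depth priors because such estimators typically predict depth only up to an unknown scale and shift, and the Pearson correlation is invariant to positive affine rescaling of either input.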

We demonstrate the visual improvement of FSGS over FreeNeRF and SparseNeRF on both forward-facing and 360-degree datasets. NeRF-based methods generate floaters and produce aliased results because of the limited observations. Our proposed FSGS yields more consistent and solid surfaces with geometric coherence.

On the LLFF dataset, we visualize results from 8 different scenes trained with 3, 6, and 9 views, respectively. FSGS produces pleasing appearances while recovering detailed thin structures.


@misc{zhu2023FSGS,
  title={FSGS: Real-Time Few-Shot View Synthesis using Gaussian Splatting},
  author={Zehao Zhu and Zhiwen Fan and Yifan Jiang and Zhangyang Wang},
  year={2023},
  eprint={2312.00451},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}